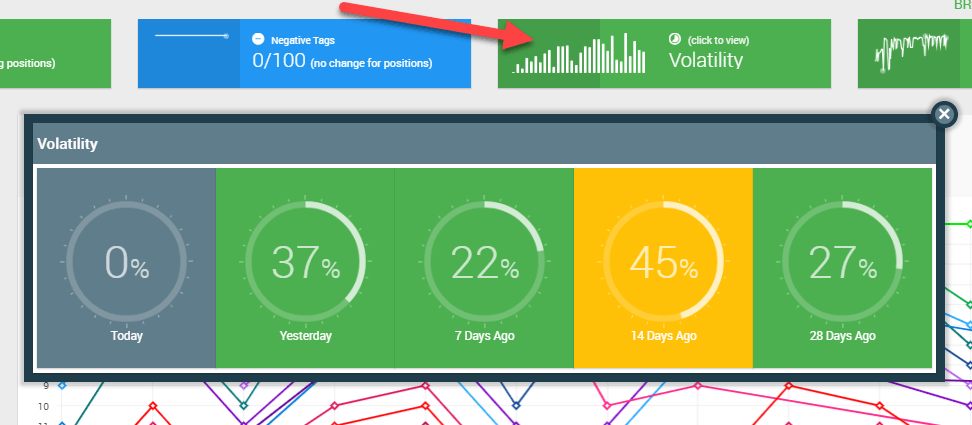

I'm a big fan of SERPWoo. At first, it was just cool to see the daily keyword fluctuations for the 100 top rankings which showed my clients the Google battlefield.

However, where the tool has come in handy for me is when working with the volatility %.

You might not focus on this SERPWoo feature in your daily work with the tool, but I hope that this article will motivate you to take a closer look.

Especially if you work on a big website with hundreds of keywords to go after, the volatility % is critical for your future SEO results.

- Some keywords are easier to rank for than others.

- Some keywords will generate more stable, profitable traffic than others in the long term.

Imagine if you could identify on your SEO radar the specific keywords which you can actually rank for and bring you steady traffic. Imagine how they could move the needle for your business – or your clients' business – over the long term.

But it is essential that you first understand the competitive landscape in Google, and SERPWoo is key to getting there.

My solution:

I have developed a practical competition score to prioritize which keywords to focus on - helping you use your resources efficiently.

I call it the Google Volatility Score. We will dive into it in a moment, and I'll show you how to create your own!

But first...

Why Use A Keyword Competition Score?

You have probably already done your keyword research and mapped out which keywords to rank for on which URLs, and you might be looking at tens or even hundreds of URLs.

So where to start?

It is a daunting task to optimize dozens if not hundreds of URLs.

For Example: You may need to optimize 50 pages on your site for targeted keywords, and simple on-page SEO will take you 2 hours per page. Do the math and we are talking about 100 work hours.

In this calculation, we are not even including off-page SEO activities such as link building and the building of extra content to build topic clusters.

Fortunately, we have the 80/20 rule.

According to this rule, we can expect that roughly 20% of our pages will generate roughly 80% of the conversions.

So:

If you do not prioritize well, you could end up doing on-page SEO for the 80% of pages, which only generate 20% of the conversions.

You need to understand which pages have the most potential to get you organic traffic and conversions and prioritize them.

And that is why you need to calculate a competition score to understand how fierce the competition is. With a competition score, you can prioritize low hanging fruit first.

So which competition scores do we traditionally use?

3 OLD SCHOOL METHODS TO CALCULATING A COMPETITION SCORE

Most SEOs will never try to calculate a competition score but will instead use fluffy metrics such as keyword search volume or keyword ranking to prioritize efforts.

Fortunately you are not one of those SEOs! 😀

We want to have a competition score for each keyword.

Before SERPWoo I used the following 3 traditional methods in practice. Most SEOs use a mix of them at some level, with additions of their own.

METHOD 1: INTITLE+INTEXT

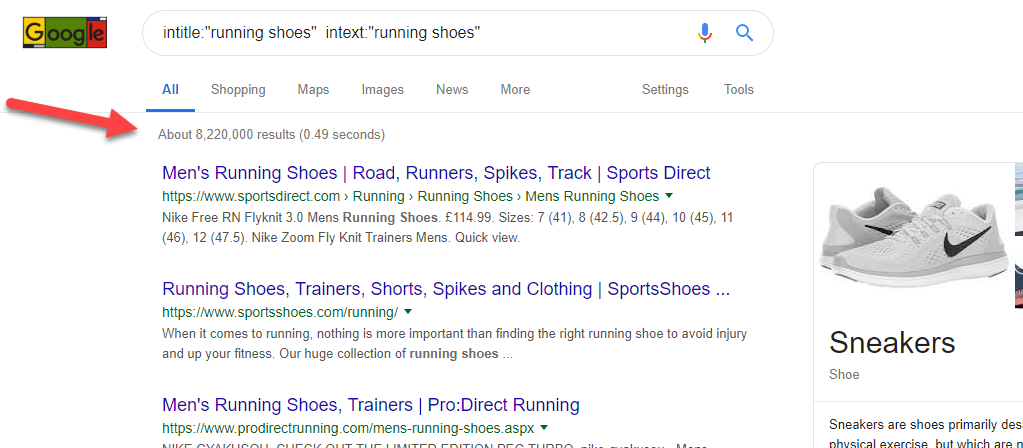

If you do an intitle+intext search in Google for the particular keyword, you can see how many search results have included the keyword in the title and in the main text.

This number indicates how many sites try to rank for the keyword, which gives us an idea of the level of competition.

Still, it does not reveal anything about the real competition on page 1 on Google.
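The search described above can be sketched in a few lines of JavaScript. The exact query format I used is only shown in the screenshot, so treat this as an assumption: it combines Google's `intitle:` and `intext:` operators with the quoted keyword, and the helper names `intitleIntextQuery` and `googleSearchUrl` are made up for this illustration.

```javascript
// Sketch: build an intitle+intext query for a keyword.
// Assumption: the keyword is quoted in both operators.
function intitleIntextQuery(keyword) {
  return `intitle:"${keyword}" intext:"${keyword}"`;
}

// Turn the query into a Google search URL you can open in the browser.
function googleSearchUrl(query) {
  return "https://www.google.com/search?q=" + encodeURIComponent(query);
}

console.log(googleSearchUrl(intitleIntextQuery("siem use cases")));
```

The number of results Google reports for that URL is the figure discussed above.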

METHOD 2: GOOGLE ADS DATA

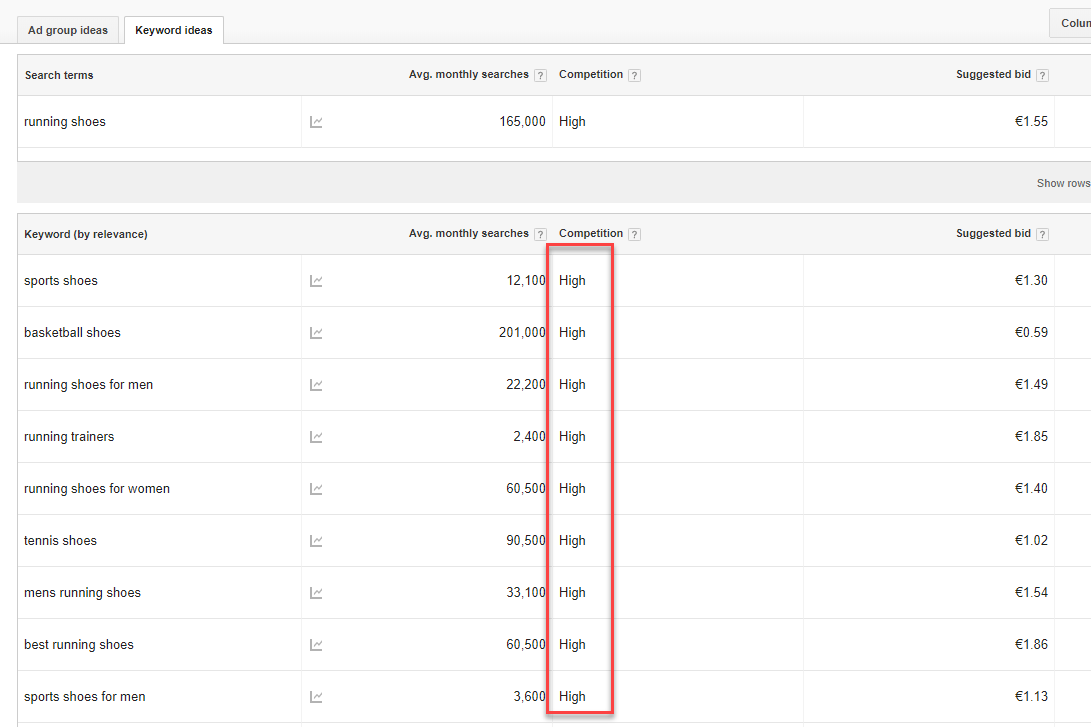

You can look at bid prices in Google Ads for a particular keyword. You can also check the number of bidders for the same keyword and use that as an indicator of the keyword difficulty.

In Google Keyword Planner, competition is defined as High, Medium or Low, but if you download the data, you can see the number of advertisers.

Again, this is not the best metric, since Google Ads and organic traffic are two different silos.

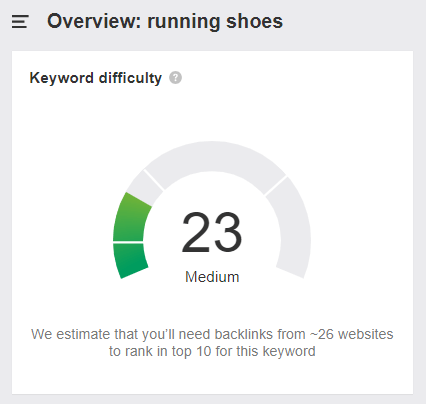

METHOD 3: KEYWORD DIFFICULTY SCORE

SEO tools such as Ahrefs, SEMRush or RankTracker all display a keyword difficulty score; e.g. the Ahrefs score is based on the number of links required to rank on page 1. As we all know, links are only one piece of the puzzle, so it is a very fluffy way to create a difficulty score.

While you can surely use the three methods above as an indicator of the level of competition, they are only scratching the surface.

Friends, we need an alternative...

INTRODUCING THE GOOGLE VOLATILITY SCORE

Of course, we already know that there is a certain search volume for the keywords we wish to rank for, and we also know that the potential traffic could boost our business.

But what should we know and use in a competition score to really give us a competitive advantage?

- How strong are the competitors on page 1 in Google?

- How many fluctuations are there on page 1 in Google?

First off, we want to make sure that we are not facing Wikipedia and Amazon in the top 3. It surely will decrease our chances to get steady traffic from the keyword.

Secondly, let us talk about fluctuations. While I have used SERPWoo for some years, it was only when I saw one of Jason's videos that I realized how important volatility on page 1 in Google is.

Movements in rankings on page 1 in Google are an essential factor because they tell us what to expect when we aim for page 1. It is fine to get to the top of the rankings, but we also want to make sure that we can rely on a steady source of traffic for a long time.

So here is my formula to calculate the competition score: (Volatility %) x (Avg. Ahrefs DR top 10) = Google Volatility Score

Some definitions:

Volatility %

This SERPWoo metric explains how many movements in rankings there are on page 1 for a specific keyword. The Volatility % will usually be higher for very competitive keywords, where many players battle every day to get to the top. On the other hand, the Volatility % will be low when nobody is actively targeting the keyword. It could also be that Volatility % is low because the competition is so strong that nobody can displace it. To read more about how Volatility % is calculated read this explanation.

We use 30 days of data to calculate Volatility %.

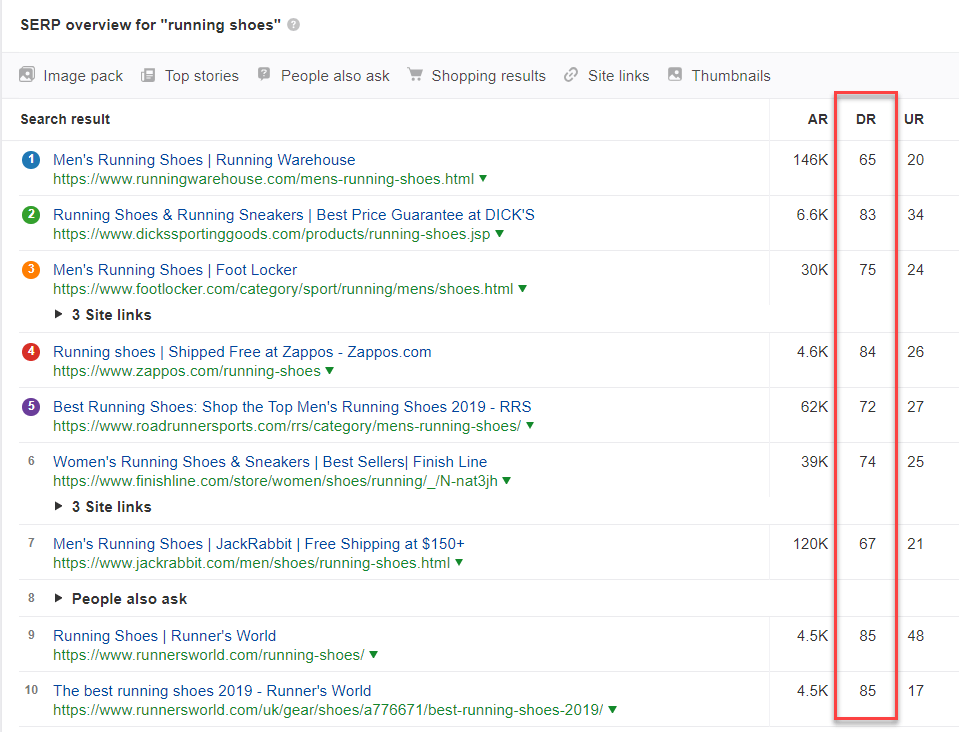

Ahrefs DR

DR stands for Domain Rating and is Ahrefs' metric, from 0 to 100, for how strong a website is.

Basically, what I want is to know how strong my competitors are. If you use another metric such as SEMRush's Authority Score or Moz' Domain Authority that is also fine.

HOW TO INTERPRET THE GOOGLE VOLATILITY SCORE?

The Google Volatility Score is a relative score, which will help us prioritize the keywords.

So, if we have a 30 day volatility % of 20 and an average Domain Rating of 50, then it will give us a Google Volatility Score of 0.2 x 50 = 10.
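The worked example above can be written as a small function. This is only a sketch: the name `googleVolatilityScore` is my own label, not part of SERPWoo or Ahrefs, and it assumes the volatility is passed as a percentage (20 for 20%).

```javascript
// Sketch of the formula: (Volatility %) x (Avg. DR of the top 10).
// Assumption: volatilityPercent is given as a percentage, e.g. 20 for 20%.
function googleVolatilityScore(volatilityPercent, avgDomainRating) {
  // Multiply first, then divide by 100, which is the same as 0.2 x 50.
  return (volatilityPercent * avgDomainRating) / 100;
}

console.log(googleVolatilityScore(20, 50)); // 10
```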

I have run some client projects by now, and below is the way that I would characterize the scores:

0 - 3.9: This keyword has low competition. We are usually up against competitors with low authority, who do not focus on this keyword. We should, therefore, be able to move quickly up the ranks to page 1 in Google, reach the top 3 and stay there. On-page SEO could be sufficient but add in some link building and you should be fine.

4 - 10: It gets harder. The competitors have a higher authority - it could be the market leaders - and they work actively with SEO. The keywords have a high search volume and are usually in the earlier stages of the customer journey.

We can probably get to page 1 inside a reasonable period (1-3 months), but the top 3 will take us 6-12 months extra to reach, and it will require more than on-page SEO to rank. A bigger link building campaign and building topic clusters will be necessary.

+10: This keyword experiences very high competition due to a high search volume and searches being clearly transactional. Competitors focus on the keyword and work actively to rank higher for it. It is business critical for them. For this type of keyword Google is prone to rank brands, so we should focus not only on SEO but also on building a brand.
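If you want to label a long keyword list automatically, the three ranges above can be sketched as a simple lookup. The band names "low", "medium" and "high" are my own shorthand, and I assume that a score between 3.9 and 4 still counts as low competition.

```javascript
// Sketch: map a Google Volatility Score to the three ranges described above.
// Assumption: scores between 3.9 and 4 are treated as low competition.
function competitionBand(score) {
  if (score < 4) return "low";      // 0 - 3.9
  if (score <= 10) return "medium"; // 4 - 10
  return "high";                    // +10
}

console.log(competitionBand(3.3));  // low
console.log(competitionBand(10));   // medium
console.log(competitionBand(16.3)); // high
```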

GOOGLE VOLATILITY SCORE EXAMPLE

I would like to show you an example, where keywords originally were selected based on rankings and search volume, but in hindsight, we have used the Google Volatility Score.

This company operates in the cybersecurity industry. They challenge the established brands, so they are the underdog within Google and their industry. It would, therefore, be very interesting for them to understand, where they can get the quickest results.

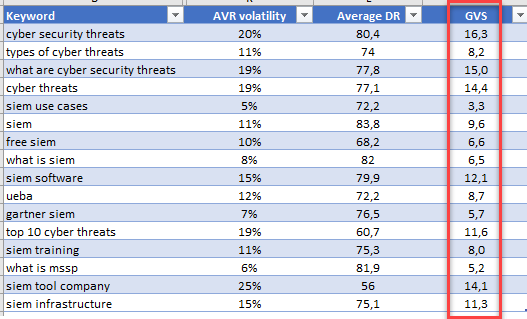

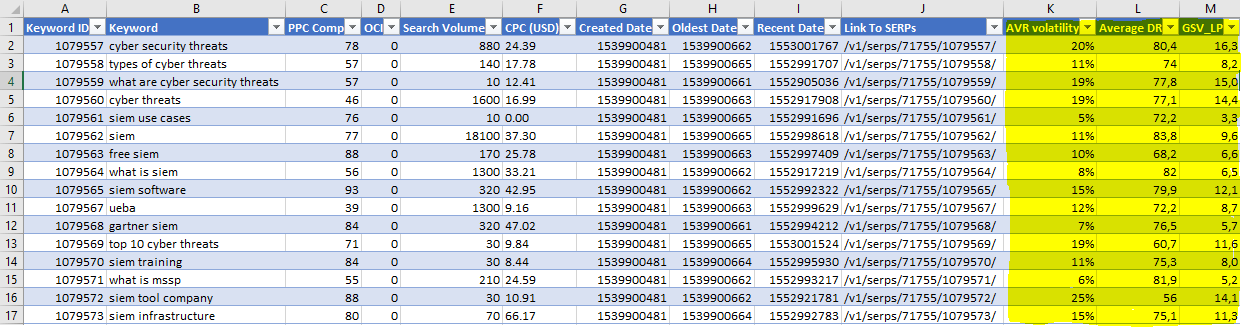

Here are their scores (Google Volatility Score is the last column):

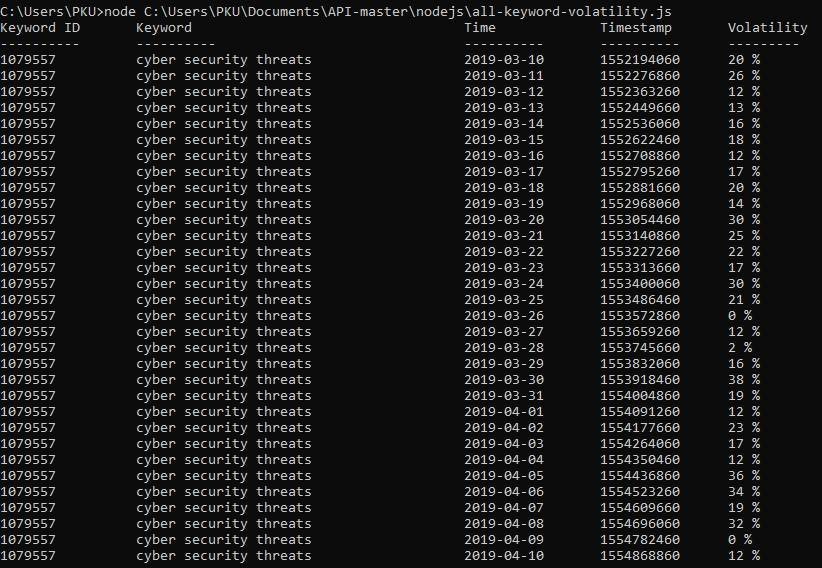

As you can see, the scores range from 3.3 to 16.3. Some keywords, such as "cybersecurity threats", are super hard to rank for, and you cannot expect to get a steady source of traffic from them.

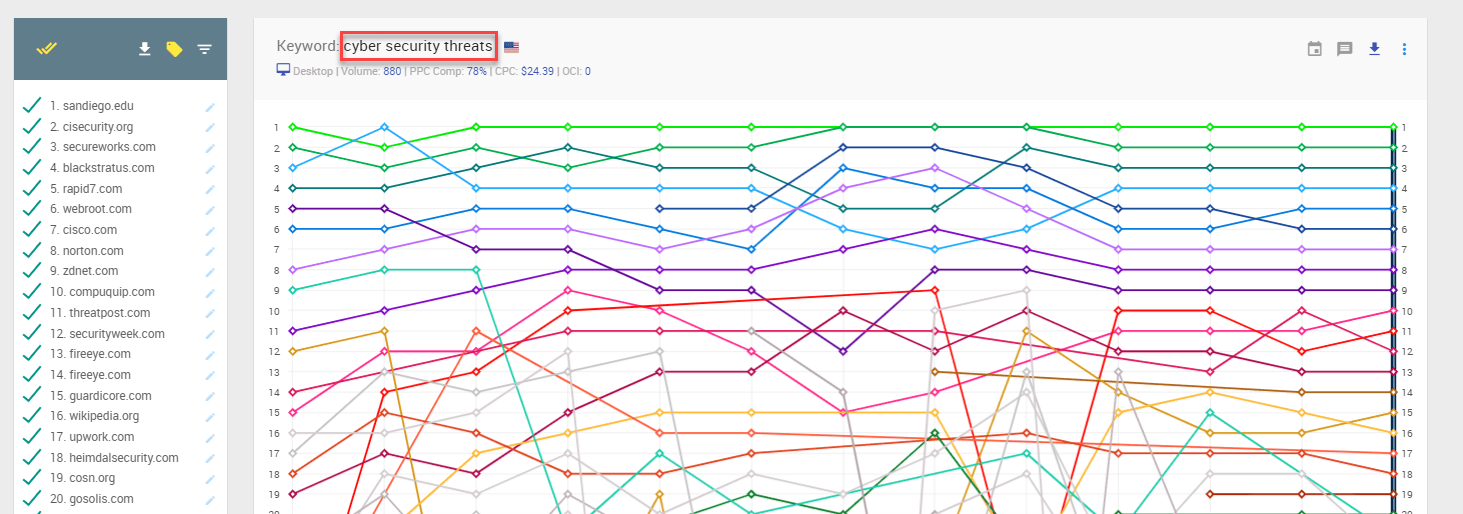

Check the massive fluctuations below for a 30-days period:

Most of the keywords in the industry are difficult to rank for. We did not prioritize URLs but optimized all the keywords at the same time originally.

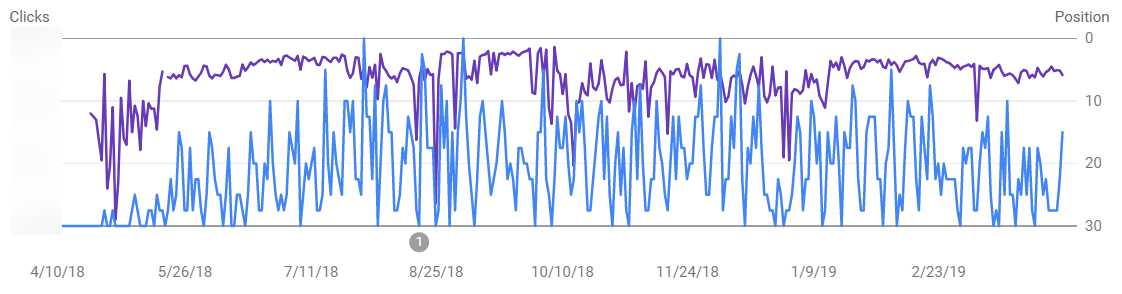

What is interesting here, is that the first keyword to break into the top 5 for us in Google US was "SIEM use cases", which also has the lowest Google Volatility Score.

Below is a screenshot from Google Search Console for the keyword displaying the ranking over the last 12 months (purple line), and the daily organic traffic coming from the keyword (blue line).

Notice how we manage very quickly to move the keyword into page 1, and how steady the ranking and traffic flow are during the subsequent 11 months.

This is the ideal trajectory we seek when doing SEO.

For some of the keywords with the highest Google Volatility Score we never even made it into the top 30, even though we put as much effort into those keywords as into the ones with a lower score.

So how could we have used the Google Volatility Score from the outset saving us a lot of time?

Here is what we learned:

1. We would have known that the keyword "SIEM use cases" was THE opportunity to break into page 1 in Google in an otherwise very competitive environment and obtain steady organic traffic.

2. We would have learned that non-transactional definition keywords such as "What is MSSP" are hard to rank for. You are up against the likes of Wikipedia. No fun.

3. We would know that this is a project which would take many months to break into page 1 for the keywords with a higher Google Volatility Score and many more months to get into the top 3. Sure it can pay off in the end, but you need to prepare your business case.

How to execute using the Google Volatility Score

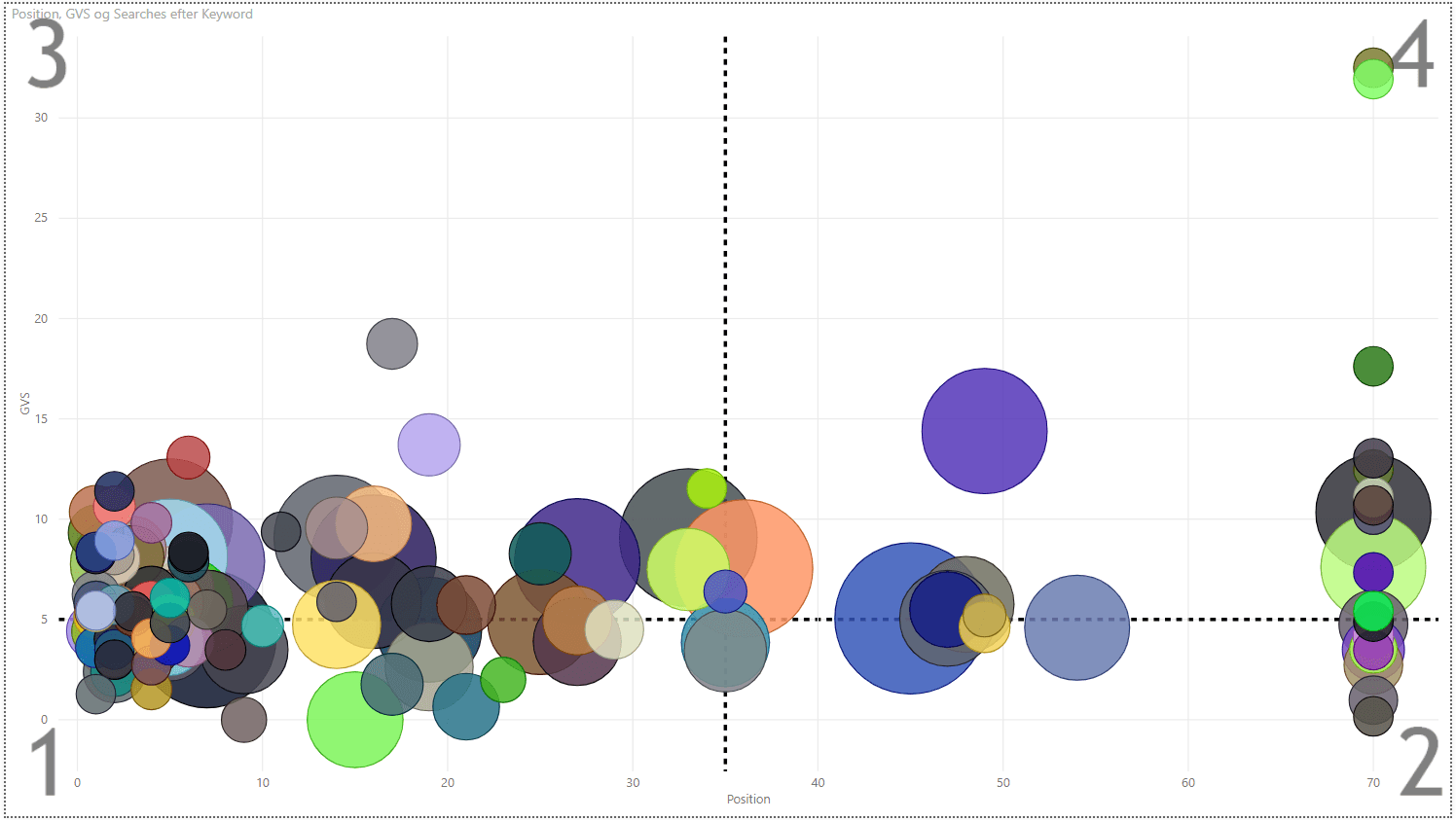

Let us take an example from another client, to show how we approach the execution. Here we have a scatter chart with 135 keywords (the bubbles). On the X-axis we have their Google ranking from left to right, and on the Y-axis we have their Google Volatility Score. The size of the bubbles represents the search volume.

I have divided the chart into 4 areas from 1 to 4. Setting aside any other possible metrics we want to target section 1 first.

Here we find the low hanging fruit. We already rank well, and the Google Volatility Score is low.

Afterward, we move on to section 2. Here we have keywords with a low volatility score, where we need to either build the content or repurpose it. If we manage to do so, we should be able to move up in rankings pretty quickly.

In Section 3 we have keywords, where we already rank on the first pages in Google, but the competition is more intense. We want to go for the lowest bubbles first in this section.

Finally, in section 4 here are the keywords, which we could have optimized first without the Google Volatility Score. Now we have it, so we know that these are the last ones to optimize for. We might even avoid them if they require that we have a known brand.
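The ordering described above can be sketched in code. The chart does not state exact boundaries between the four sections, so the cut-offs below (`goodRank` and `lowScore`) are hypothetical and should be replaced with your own, and the sample keywords are made up purely to show the ordering.

```javascript
// Hypothetical cut-offs: the chart does not state exact boundaries.
const CUTOFFS = { goodRank: 20, lowScore: 4 };

// Return the chart section (1-4) for a keyword with a rank and a score.
function sectionFor({ rank, score }, cutoffs = CUTOFFS) {
  const ranksWell = rank <= cutoffs.goodRank;
  const lowScore = score < cutoffs.lowScore;
  if (lowScore) return ranksWell ? 1 : 2;
  return ranksWell ? 3 : 4;
}

// Order keywords by section, then by lowest score within a section.
function prioritize(keywords, cutoffs = CUTOFFS) {
  return keywords
    .map((kw) => ({ ...kw, section: sectionFor(kw, cutoffs) }))
    .sort((a, b) => a.section - b.section || a.score - b.score);
}

// Made-up sample values, only to show the ordering.
const sample = [
  { keyword: "keyword A", rank: 45, score: 12.0 },
  { keyword: "keyword B", rank: 8, score: 2.5 },
  { keyword: "keyword C", rank: 15, score: 7.1 },
  { keyword: "keyword D", rank: 60, score: 3.0 },
];
console.log(prioritize(sample).map((kw) => `${kw.section}: ${kw.keyword}`));
```

Sorting by score inside each section mirrors the advice to go for the lowest bubbles first.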

Long read, but you stayed to the end. Thanks. However we are not done.

Are you excited about the Google Volatility Score and want to try it out?

Here are the steps to create your own:

The 3-step guide to calculating the Google Volatility Score for your keywords

In the following, I will walk you through the step-by-step guide to obtain the Google Volatility Score for your keywords.

Calculating the Google Volatility Score is divided into 3 steps:

- Get the Volatility % via an API call to SERPWoo.

- Extract the average Domain Rating in Ahrefs for the top 10 URLs for each keyword.

- Merge the two datasets.

I use the tools and scripts below to calculate the Google Volatility Score. Ahrefs is the only paid tool (besides your SERPWoo subscription), but as I explained earlier, you can use any other similar tool to get a useful score. SeoTools for Excel has a free 14-day trial, so you can test it out (anyway, it should be part of any SEO's toolkit).

- SERPWoo API

- Javascript

- Node.js

- Excel

- SeoTools for Excel

- Ahrefs

STEP 1: Call the Volatility % in SERPWoo

A. Get your API key via your SERPWoo account.

B. Install Node.js.

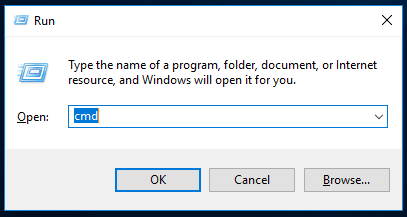

You can download Node.js here. Test Node.js in your Windows command (WIN+r, type in "cmd").

Afterward type in node -v in your command line and the installed version will be displayed. See the example below:

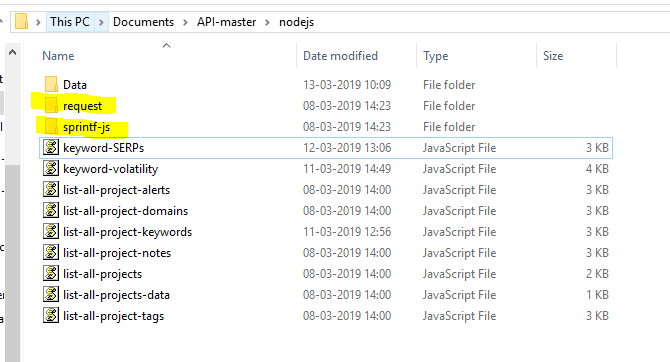

C. Download SERPWoo's API folder from Github (Create an account at Github, and download the SERPWoo API).

D. Use the following script for your keywords.

In the original SERPWoo volatility script you can only make a call to get the volatility % of a single keyword. However, we have developed a script to make an API call for all the keywords in your project.

Script for one keyword

If you only want to test and call one single keyword, go to the downloaded folder and choose the subfolder "nodejs". Here you will find the script keyword-volatility. This script will call the Volatility % for one specific keyword from a project.

However, if you want to run a list of keywords from a project, open the script below in your editor (e.g. "Visual Studio Code").

Here is the script:

var request = require("request");

var sprintf = require("sprintf-js").sprintf;

// Get your API Key here: https://www.serpwoo.com/v3/api/ (should be logged in)

var API_key = "<YOUR API KEY>";

var Project_ID = <YOUR PROJECT ID>;

var KEYWORDS_LIST = [];

var urlproject = "https://api.serpwoo.com/v1/projects/" + Project_ID + "/keywords/?key=" + API_key

request({

url: urlproject,

json: true

}, function (error, response, JsonData) {

if (!error && response.statusCode === 200) {

if (JsonData.success === 1) {

for(var project_id in JsonData.projects) {

for(var id in JsonData.projects[project_id]['keywords']) {

KEYWORDS_LIST.push(id)

}

}

loop_through_list(KEYWORDS_LIST);

}else {

console.log("Something went wrong: ", JsonData.error);

}

}

});

async function loop_through_list(KEYWORDS_LIST) {

for (var id_of_keyword in KEYWORDS_LIST) {

Keyword_ID = KEYWORDS_LIST[id_of_keyword];

call_api(Keyword_ID);

await sleep(3000); // Pause between calls; with a shorter delay some text output may not be read correctly

}

console.log("--[End]");

}

//API call function

console.log(sprintf("%-16s %-40s %-16s %-15s %-10s", 'Keyword ID', 'Keyword', 'Time', 'Timestamp', 'Volatility'));

console.log(sprintf("%-16s %-40s %-16s %-15s %-10s", '----------', '----------', '----------', '----------', '---------'));

function call_api(the_keyword_id = 0){

if (the_keyword_id == 0) { return; }

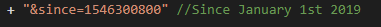

var url = "https://api.serpwoo.com/v1/volatility/" + Project_ID + "/" + Keyword_ID + "/?key=" + API_key + "&since=1546300800" + "&metadata=1" //Since January 1st 2019

request({

url: url,

json: true

}, function (error, response, JsonData) {

if (!error && response.statusCode === 200) {

if (JsonData.success === 1) {

for(var project_id in JsonData) {

for(var keyword_id in JsonData[project_id]) {

if (keyword_id == the_keyword_id) {

for (var timestamp in JsonData[project_id][keyword_id]) {

for (var volatility in JsonData[project_id][keyword_id][timestamp]) {

console.log(sprintf("%-16s %-40s %-16s %-15s %-10s", keyword_id, JsonData["meta"][project_id]["keyword"][keyword_id]["kw"], formatDate(timestamp), timestamp, JsonData[project_id][keyword_id][timestamp].volatility + ' %'));

}

}

}

}

}

//console.log("\n");

}else {

console.log("Something went wrong: ", JsonData.error);

}

}

});

}

// Formating Date

function formatDate(date) {

var d = new Date(date * 1000), month = '' + (d.getMonth() + 1), day = '' + d.getDate(), year = d.getFullYear();

if (month.length < 2) month = '0' + month;

if (day.length < 2) day = '0' + day;

return [year, month, day].join('-');

}

//Sleep

function sleep(ms) {

return new Promise(resolve => setTimeout(resolve, ms));

}

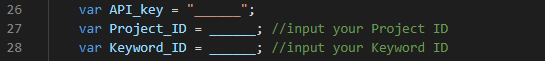

E. Add API-KEY and PROJECT ID (and Keyword ID if using single keyword script) in your chosen script.

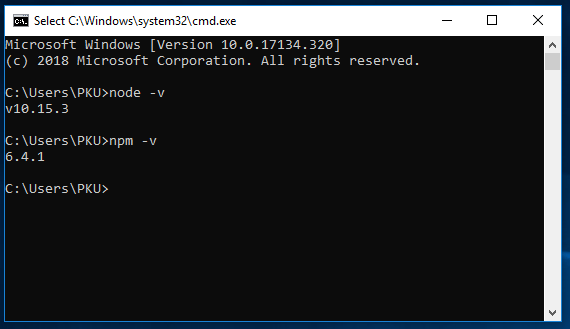

In the script, you add your generated API key and the Project_ID (and Keyword_ID if using single keyword script). The API key is generated from your SERPWoo account. You find the Project_ID and the Keyword_ID in the link when clicking on a keyword in your project.

See below:

For the single-keyword script, insert the API_key, the Project_ID and the Keyword_ID. For the multi-keyword script (the sample code above), insert only the API_key and the Project_ID. Remember to save your .js file.

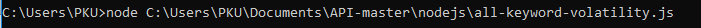

Go back to your command prompt from step B, and write node followed by the file path of the script. See the example below:

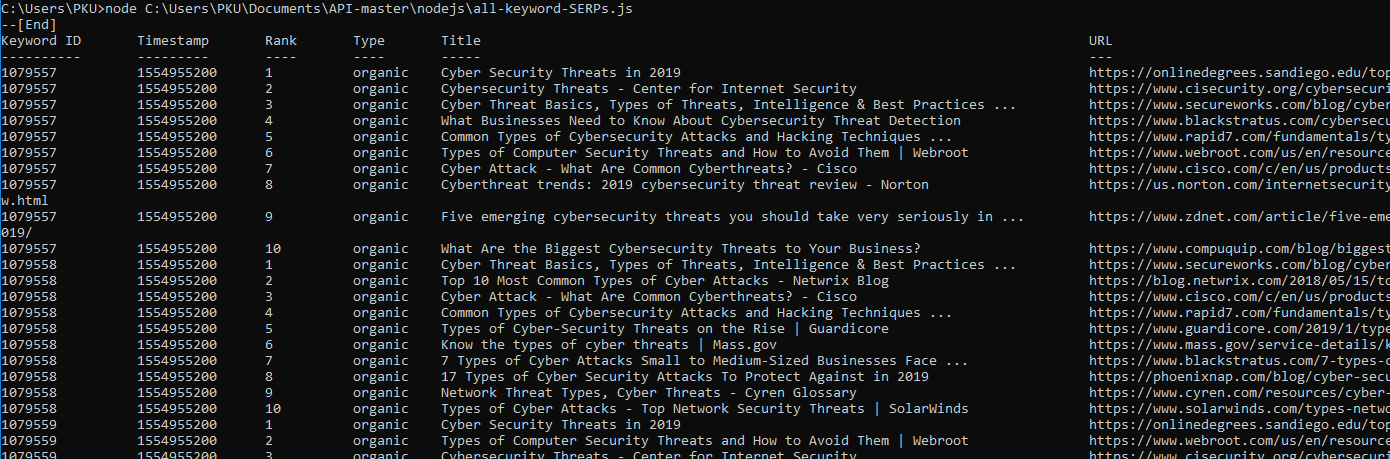

Here is an example of the output. You copy/paste the result into Excel:

If you receive an error in this phase, there can be multiple reasons. Here are the typical ones:

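- "error request"

- "error sprintf-js"

These errors mean that Node.js cannot find the request or sprintf-js module. Find the respective module folders on your computer and copy them into the same folder as the script (the folder called "nodejs"). Alternatively, running npm install request sprintf-js from inside that folder should install both modules.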

F. We collect 30 days of data.

By default, the script requests data from January 1, 2019 onward: "1546300800" in the URL is the Unix timestamp for that date. If you want the Google Volatility Score for another period, such as the last 30 days, change the since value in the URL. Other timestamps can be generated via timestampgenerator.com.
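You can also compute the timestamp in Node.js instead of using a website. The sketch below is one way to do it; the helper names `toUnixSeconds` and `sinceDaysAgo` are my own and not part of the SERPWoo scripts.

```javascript
// Convert a Date to a Unix timestamp in seconds, the unit used by "since".
function toUnixSeconds(date) {
  return Math.floor(date.getTime() / 1000);
}

// Timestamp for N days before a given date (defaults to now).
function sinceDaysAgo(days, from = new Date()) {
  const msPerDay = 24 * 60 * 60 * 1000;
  return toUnixSeconds(new Date(from.getTime() - days * msPerDay));
}

console.log(toUnixSeconds(new Date(Date.UTC(2019, 0, 1)))); // 1546300800
console.log(sinceDaysAgo(30)); // start of a 30-day window ending now
```

Paste the second value into the since parameter to collect the most recent 30 days of data.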

STEP 2: Make an API call to get the Domain Rating from Ahrefs for the Top 10 URLs per keyword

The following SERPWoo script calls the URLs for a single keyword. We have edited the script, so it calls the Top 10 URLs for all the keywords in a project.

var request = require("request");

var sprintf = require("sprintf-js").sprintf;

// Get your API Key here: https://www.serpwoo.com/v3/api/ (should be logged in)

var API_key = "<YOUR API KEY>";

var Project_ID = <YOUR PROJECT ID>;

// Here is the list of keyword_ids we want to query

var KEYWORDS_LIST = [];

var urlproject = "https://api.serpwoo.com/v1/projects/" + Project_ID + "/keywords/?key=" + API_key

request({

url: urlproject,

json: true

}, function (error, response, JsonData) {

if (!error && response.statusCode === 200) {

if (JsonData.success === 1) {

for(var project_id in JsonData.projects) {

for(var id in JsonData.projects[project_id]['keywords']) {

KEYWORDS_LIST.push(id)

}

}

loop_through_list(KEYWORDS_LIST);

}else {

console.log("Something went wrong: ", JsonData.error);

}

}

});

async function loop_through_list() {

for (var id_of_keyword in KEYWORDS_LIST) {

Keyword_ID = KEYWORDS_LIST[id_of_keyword];

call_api(Keyword_ID);

await sleep(2000); // Pause between calls; with a shorter delay some text output may not be read correctly

}

console.log("--[End]");

}

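// loop_through_list() is started from the request callback above, once KEYWORDS_LIST has been filled.

// Calling it here as well would print "--[End]" before any keyword has been fetched.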

console.log(sprintf("%-16s %-15s %-10s %-10s %-80s %-80s", 'Keyword ID', 'Timestamp', 'Rank', 'Type', 'Title', 'URL'));

console.log(sprintf("%-16s %-15s %-10s %-10s %-80s %-80s", '----------', '---------', '----', '----', '-----', '---'));

//API call function

function call_api(the_keyword_id = 0){

if (the_keyword_id == 0) { return; }

var url = "https://api.serpwoo.com/v1/serps/" + Project_ID + "/" + the_keyword_id + "/?key=" + API_key + "&since=1554955200" + "&range_bottom=10" + "&metadata=1" // Uses the function argument, not the global Keyword_ID

request({

url: url,

json: true

}, function (error, response, JsonData) {

if (!error && response.statusCode === 200) {

if (JsonData.success === 1) {

for(var keyword_id in JsonData) {

if (keyword_id == the_keyword_id) {

for (var timestamp in JsonData[keyword_id]) {

for (var JsonField in JsonData[keyword_id][timestamp]) {

if (JsonField == "results") {

for(var rank in JsonData[keyword_id][timestamp]['results']) {

console.log(sprintf("%-16s %-15s %-10s %-10s %-80s %-80s", keyword_id, timestamp, rank, JsonData[keyword_id][timestamp]["results"][rank].type, JsonData[keyword_id][timestamp]["results"][rank].title, JsonData[keyword_id][timestamp]["results"][rank].url));

}

}

}

}

}

}

}else {

console.log("Something went wrong: ", JsonData.error);

}

}

});

}

// Formating Date

function formatDate(date) {

var d = new Date(date * 1000), month = '' + (d.getMonth() + 1), day = '' + d.getDate(), year = d.getFullYear();

if (month.length < 2) month = '0' + month;

if (day.length < 2) day = '0' + day;

return [year, month, day].join('-');

}

//Sleep

function sleep(ms) {

return new Promise(resolve => setTimeout(resolve, ms));

}

If you want the current Top 10 URLs in Google, make sure to replace the since value with today's timestamp (or yesterday's, if today's data has not been processed yet). Otherwise, the Top 10 URLs will be based on the rankings from the timestamp in the script, "1554955200", which is April 11, 2019 (UTC).

Afterward you copy the output into the same Excel file as the Volatility % in a new tab.

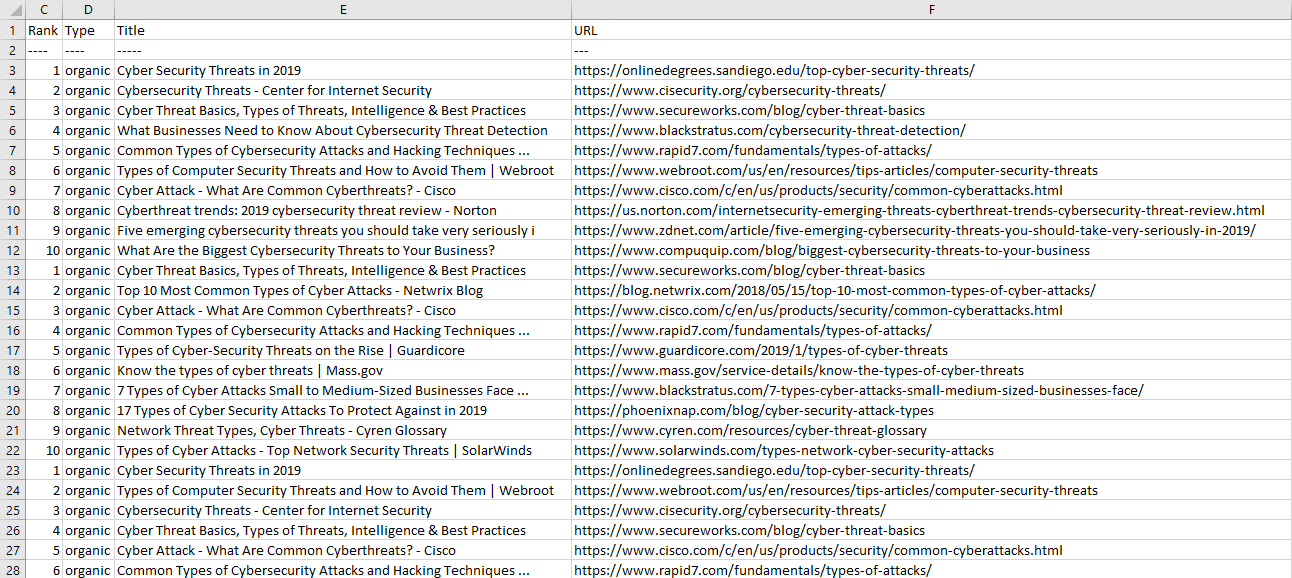

Look at the example below:

STEP 3: Merge the two data sets to get your Google Volatility Score

In the last step, we will merge the first two data sets – Volatility % and Domain Rating.

Here are the different steps:

Copy the Volatility % data into an Excel sheet. The pasted data will be in column A. Mark column A and click on Data -> Text to Columns to divide the data into 5 columns.

See below:

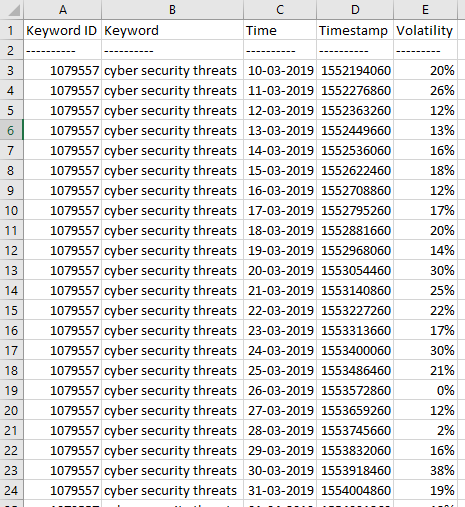

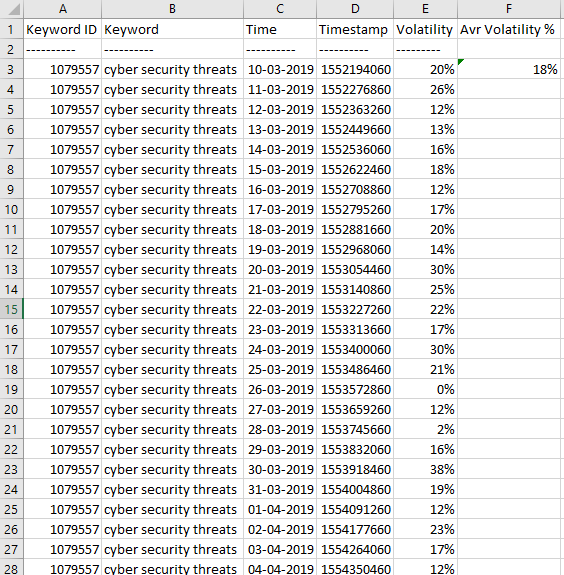

- Afterward you'll find the average of the Volatility % for each keyword.

Look at the example below:

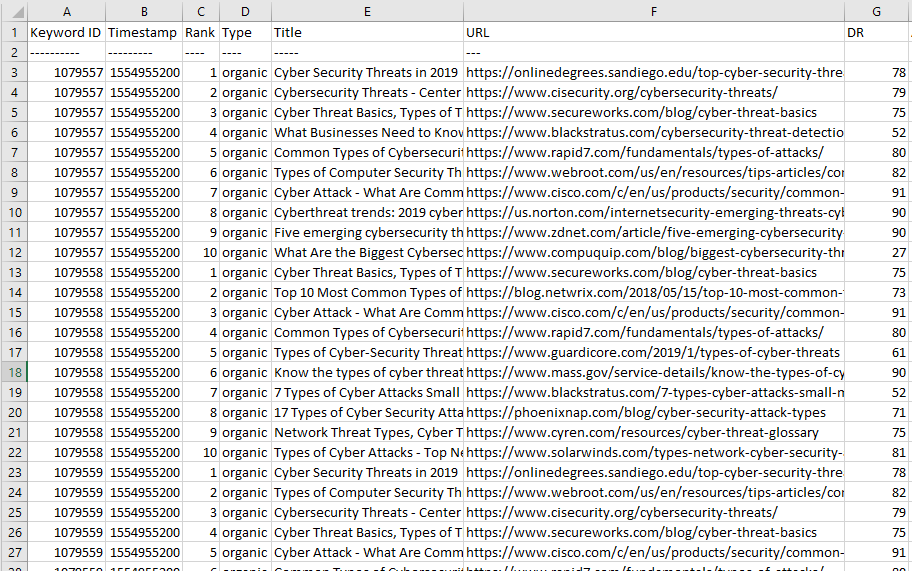
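If you prefer to do the averaging outside Excel, it could be sketched like this in JavaScript. I assume each row has already been split into a `keyword` and a numeric `volatility` (with the trailing " %" from the script output removed); the function name is hypothetical.

```javascript
// Sketch: average the Volatility % per keyword.
// Assumption: each row is { keyword, volatility } with volatility as a number.
function averageVolatilityByKeyword(rows) {
  const totals = new Map();
  for (const { keyword, volatility } of rows) {
    const entry = totals.get(keyword) || { sum: 0, count: 0 };
    entry.sum += volatility;
    entry.count += 1;
    totals.set(keyword, entry);
  }
  // Turn the running totals into one average per keyword.
  const averages = {};
  for (const [keyword, { sum, count }] of totals) {
    averages[keyword] = sum / count;
  }
  return averages;
}
```

Calling it with one row per keyword and day returns an object with one average per keyword, which matches what the Excel average gives you.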

The next step is to clean the data for the keywords in the Domain Rating tab, in order to make it possible to find the average Domain Rating for the top 10 URLs per keyword.

Copy all data from the command into its own sheet, but in the same file as Volatility %. The pasted data will be in the column A. Mark column A, and click on Data -> Text to Columns to divide the data into 4 columns.

See the example below:

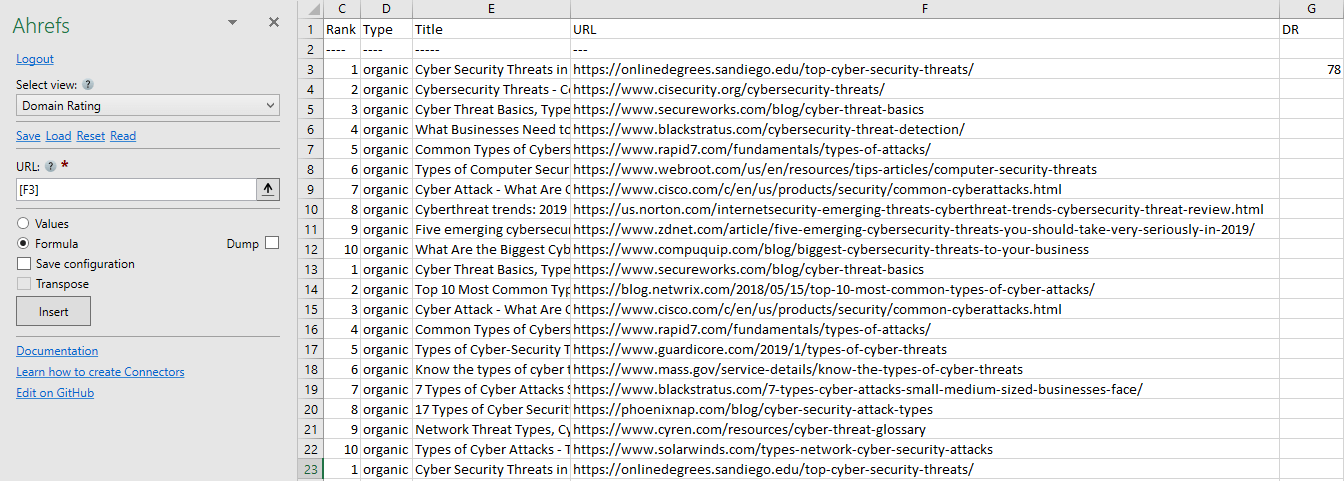

- Afterward you want to find the Ahrefs Domain Rating via SeoTools for Excel. SeoTools requires a login and lets you import data from different SEO tools directly into Excel.

- To get a URL's Domain Rating, we use the Ahrefs connector. To retrieve the data from Ahrefs, you must generate an API key.

- After logging in and entering the Ahrefs API key, do the following:

Insert the URL field (here [F3]) for each specific URL and click "Insert". Then simply drag down the DR column to get the Domain Rating for all your URLs.

See example below:

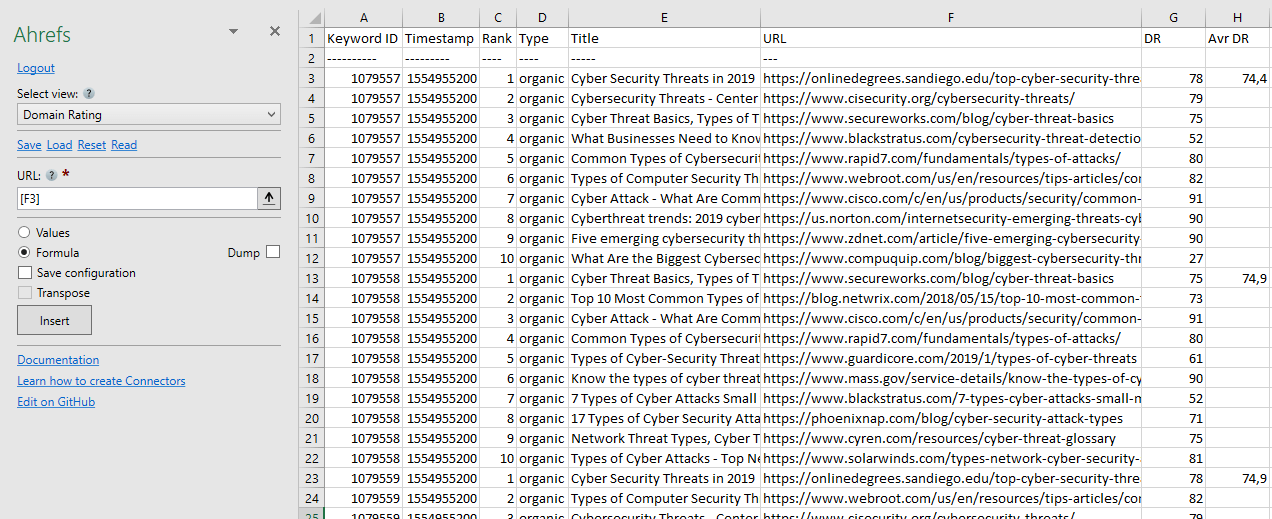

Afterward you calculate the average Domain Rating for each keyword.
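As a rough sketch of that average in code: the function below takes the ranked URLs for one keyword and a lookup of Domain Ratings per domain. Both the function name and the `drByDomain` lookup are assumptions for illustration; you would fill the lookup yourself from the values you retrieved from Ahrefs.

```javascript
// Sketch: average Domain Rating of the top 10 URLs for one keyword.
// Assumptions: `urls` holds the ranked URLs for a single keyword and
// `drByDomain` is a lookup you filled yourself, keyed by hostname.
function averageTopTenDomainRating(urls, drByDomain) {
  const ratings = urls
    .slice(0, 10)                           // keep only the top 10
    .map((url) => new URL(url).hostname)    // DR is a per-domain metric
    .filter((host) => host in drByDomain)   // skip domains without a rating
    .map((host) => drByDomain[host]);
  if (ratings.length === 0) return null;
  return ratings.reduce((sum, dr) => sum + dr, 0) / ratings.length;
}
```

Returning null when no rating is available keeps a missing lookup from silently turning into a score of 0.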

Finally, you have the Volatility % and Domain Rating for each keyword. Put them together in a table and multiply them to get the Google Volatility Score.

See the example below:
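In code, the final multiplication could be sketched as follows. I assume both inputs are plain objects keyed by keyword, that the volatility is a percentage (20 means 20%), and that keywords missing from either input are skipped; the function name `buildScoreTable` is hypothetical.

```javascript
// Sketch: combine per-keyword averages into a Google Volatility Score table.
// Assumptions: both inputs are plain objects keyed by keyword; volatility is
// a percentage; keywords missing from either input are skipped.
function buildScoreTable(avgVolatility, avgDomainRating) {
  return Object.keys(avgVolatility)
    .filter((keyword) => keyword in avgDomainRating)
    .map((keyword) => ({
      keyword,
      volatility: avgVolatility[keyword],
      domainRating: avgDomainRating[keyword],
      score: (avgVolatility[keyword] * avgDomainRating[keyword]) / 100,
    }))
    .sort((a, b) => a.score - b.score); // lowest score first
}
```

Sorting ascending puts the keywords with the lowest Google Volatility Score, the low hanging fruit, at the top of the table.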

CONCLUSION

The Google Volatility Score is a practical method for us to solve a concrete challenge:

"How do we spend our resources in the best way possible to get traffic via our most important keywords?"

You may never make it to the end of your URL mapping, but this is a way to start with the keywords that have the highest potential.

A very high Google Volatility Score does not necessarily mean that we will not make an effort to rank the keyword. But it does mean that our timeline expectations extend, and we will calculate a more precise business case when investing our time.
